
Introduction

Artificial intelligence is transforming the way businesses operate. Many companies are now using AI agents to automate customer support, analyze data, manage marketing campaigns, and improve business operations. These intelligent systems can process large amounts of information quickly and help organizations make better decisions.

However, as businesses adopt AI technology, an important question arises: How does AI affect data privacy?

AI agents often handle sensitive information such as customer details, financial records, personal communications, and behavioral data. If this information is not properly protected, it can create serious privacy and security risks.

For businesses, protecting customer data is not only an ethical responsibility but also a legal requirement in many countries.

In this detailed guide, we will explain how AI agents interact with data, the privacy risks involved, and what businesses must do to protect sensitive information while using AI technology responsibly.

What Are AI Agents?

Before looking at data privacy concerns, it helps to understand what AI agents are.

AI agents are intelligent software systems designed to perform tasks automatically using artificial intelligence technologies such as machine learning, natural language processing, and predictive analytics.

These systems can interact with users, analyze data, and perform complex tasks without constant human involvement.

AI agents are commonly used for:

- customer support automation

- marketing automation

- sales and lead generation

- business analytics

- workflow management

Because these systems process large amounts of data, data privacy becomes a critical issue.

Why Data Privacy Matters in AI Systems

Data privacy is one of the most important topics in modern technology.

Businesses collect and store a large amount of data from customers, employees, and partners. AI agents often analyze this data to generate insights and automate processes.

Protecting this information is essential for several reasons.

Protecting Customer Trust

Customers share personal information with businesses.

This information may include:

- contact details

- payment information

- purchase history

- online behavior

If businesses fail to protect this data, customer trust can be lost.

Legal and Regulatory Requirements

Many countries have strict data protection laws.

Businesses must follow regulations that control how data is collected, stored, and used.

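Failure to comply with these laws can lead to heavy penalties. Under the EU's GDPR, for example, fines can reach €20 million or 4% of annual worldwide turnover, whichever is higher.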

Preventing Cybersecurity Threats

Sensitive data can attract cybercriminals.

Strong privacy practices help prevent data breaches and cyber attacks.

How AI Agents Use Business Data

AI agents rely on data to function effectively.

Understanding how these systems use data helps businesses identify potential privacy risks.

AI systems typically process several types of data.

1. Customer Data

AI agents often analyze customer information to improve services.

Examples include:

- customer preferences

- browsing history

- purchase behavior

- communication records

This data helps businesses personalize experiences.

2. Operational Data

AI systems analyze internal business data to optimize operations.

This may include:

- sales reports

- marketing performance

- supply chain information

AI agents use this data to generate insights and recommendations.

3. Behavioral Data

AI systems can analyze behavioral patterns to predict customer needs.

Examples include:

- website activity

- product interactions

- search behavior

This allows businesses to improve customer experiences.

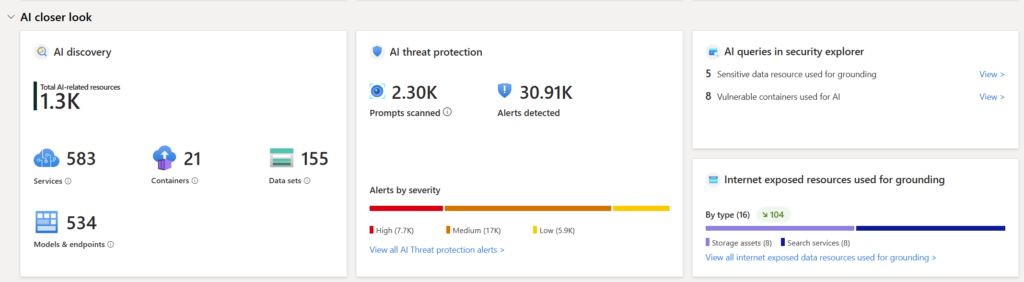

Data Privacy Risks Associated with AI Agents

While AI agents offer many benefits, they also introduce several privacy risks.

Understanding these risks helps businesses implement better safeguards.

1. Unauthorized Data Access

AI systems may store or process sensitive information.

If proper security controls are not implemented, unauthorized users may gain access to this data.

Possible causes include:

- weak access controls

- poor authentication systems

- insecure databases

This can lead to serious privacy breaches.
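As a rough illustration of what a stronger access control can look like, the sketch below checks a caller's role before returning a field and refuses anything not explicitly granted. The role names, permission strings, and the `read_field` helper are hypothetical and are not taken from any particular product.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission mapping; a real system would load this
# from a policy store rather than hard-coding it.
ROLE_PERMISSIONS = {
    "support_agent": {"read:contact_details"},
    "billing_agent": {"read:contact_details", "read:payment_info"},
    "analytics_agent": {"read:purchase_history"},
}


@dataclass(frozen=True)
class Caller:
    name: str
    role: str


def is_allowed(caller: Caller, permission: str) -> bool:
    """Deny by default: unknown roles and unlisted permissions are refused."""
    return permission in ROLE_PERMISSIONS.get(caller.role, set())


def read_field(caller: Caller, record: dict, field: str):
    """Return a field only if the caller's role grants read access to it."""
    if not is_allowed(caller, f"read:{field}"):
        raise PermissionError(f"{caller.name} ({caller.role}) may not read {field}")
    return record[field]
```

With this mapping, a caller with the `support_agent` role can read `contact_details`, but a request for `payment_info` raises `PermissionError`. Denying by default means a new role or field stays inaccessible until someone grants access on purpose.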

2. Data Breaches

Data breaches occur when confidential information is exposed or stolen.

AI systems that store large datasets may become targets for cyber attacks.

Consequences of data breaches include:

- financial losses

- legal penalties

- reputational damage

Businesses must implement strong security practices to prevent such incidents.

3. Excessive Data Collection

Some AI systems collect more data than necessary.

Collecting unnecessary data increases privacy risks.

Businesses should follow the principle of data minimization, which means collecting only the data required for a specific purpose.
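Free text such as chat messages is one place where unneeded personal data slips in. The sketch below strips email addresses from a message before it is stored or passed to an AI agent. The pattern is a deliberately simple assumption that covers typical addresses only, and `redact_emails` is a hypothetical helper name.

```python
import re

# Simple pattern for typical email addresses; it is not a full RFC 5322 parser.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact_emails(text: str, placeholder: str = "[EMAIL]") -> str:
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL_RE.sub(placeholder, text)


message = "Please send the invoice to jane.doe@example.com by Friday."
print(redact_emails(message))
# prints: Please send the invoice to [EMAIL] by Friday.
```

A production system would likely need to cover more identifier types (phone numbers, account numbers) and would be tested against real message formats.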

4. Lack of Transparency

Customers often do not know how their data is used by AI systems.

This lack of transparency can lead to ethical concerns and regulatory issues.

Businesses must clearly communicate how customer data is processed.

5. AI Bias and Ethical Issues

AI systems learn from data.

If the data used for training contains bias, the AI system may produce unfair outcomes.

This can create ethical and legal challenges.

For example, biased AI systems may affect:

- hiring decisions

- loan approvals

- marketing targeting

Businesses must ensure fairness and transparency in AI systems.
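One simple first check for unfair outcomes is to compare how often each group receives a positive decision. The sketch below computes per-group approval rates and the ratio of the lowest rate to the highest. The group labels and decisions are made-up illustrative data, and a low ratio is only a signal to investigate, not proof of bias.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of positive outcomes per group.

    decisions is an iterable of (group, approved) pairs.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def rate_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means equal rates)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Made-up example: group A is approved 8 times out of 10, group B 4 out of 10.
decisions = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)] * 4 + [("B", False)] * 6
rates = selection_rates(decisions)
print(rates)              # {'A': 0.8, 'B': 0.4}
print(rate_ratio(rates))  # 0.5
```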

Data Protection Regulations Businesses Should Know

Many governments have introduced laws to protect personal data.

Businesses using AI agents must comply with these regulations.

Some important data protection frameworks include:

General Data Protection Regulation (GDPR)

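This regulation protects the personal data of people in the European Union. It applies to organizations established in the EU and to organizations elsewhere that offer goods or services to, or monitor the behavior of, people in the EU.

It requires businesses to have a lawful basis for processing personal data. User consent is one such basis; others include performing a contract and legitimate interests.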

California Consumer Privacy Act (CCPA)

This law gives California residents rights over their personal information.

Consumers can ask what personal information a business collects about them and how it is used, request its deletion, and opt out of its sale.

Other Global Data Protection Laws

Many countries now have data protection regulations that businesses must follow.

Companies must ensure compliance with local laws when handling data.

Best Practices for Protecting Data Privacy with AI Agents

Businesses can reduce privacy risks by implementing strong data protection practices.

Below are several important strategies.

1. Implement Strong Data Security

Businesses must protect data with several layers of security rather than relying on a single measure.

Examples include:

- encryption of sensitive data

- secure data storage systems

- multi-factor authentication

- regular security audits

These practices help prevent unauthorized access.
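Python's standard library does not include symmetric encryption, so the sketch below shows a related technique instead: pseudonymization with a keyed hash (HMAC-SHA256). It replaces an identifier with a token that cannot be reversed without the key. This complements encryption rather than replacing it, and the record fields and key handling here are assumptions for illustration.

```python
import hashlib
import hmac
import secrets


def pseudonymize(value: str, key: bytes) -> str:
    """Return a keyed SHA-256 digest (HMAC) of value as a hex string.

    The same value and key always give the same token, so records can
    still be joined, but the token cannot be reversed without the key.
    """
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


# Assumption: in practice the key comes from a secrets manager, not from code.
key = secrets.token_bytes(32)

customer = {"email": "jane@example.com", "plan": "pro"}
safe_record = {**customer, "email": pseudonymize(customer["email"], key)}
```

An analytics agent can then work with `safe_record` and still group rows by customer without ever seeing the real email address.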

2. Follow Data Minimization Principles

Businesses should collect only the data required for specific purposes.

This reduces privacy risks and simplifies compliance with regulations.
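A minimal way to enforce this in code is an allowlist of fields per purpose, applied before any record reaches an AI agent. The purposes and field names below are hypothetical.

```python
# Hypothetical allowlist: the fields each purpose is permitted to use.
ALLOWED_FIELDS = {
    "order_support": {"order_id", "order_status", "first_name"},
    "product_recommendations": {"purchase_history"},
}


def minimize(record: dict, purpose: str) -> dict:
    """Keep only the fields approved for this purpose; drop everything else."""
    allowed = ALLOWED_FIELDS.get(purpose)
    if allowed is None:
        raise ValueError(f"no approved field list for purpose: {purpose}")
    return {k: v for k, v in record.items() if k in allowed}


customer = {
    "first_name": "Jane",
    "email": "jane@example.com",
    "card_last4": "4242",
    "order_id": "A-1001",
    "order_status": "shipped",
}
print(minimize(customer, "order_support"))
# prints: {'first_name': 'Jane', 'order_id': 'A-1001', 'order_status': 'shipped'}
```

Raising an error for an unknown purpose, instead of passing the full record through, keeps new use cases from receiving data until someone has decided which fields they actually need.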

3. Ensure Transparency with Customers

Customers should understand how their data is used.

Businesses should provide clear privacy policies explaining:

- what data is collected

- how it is used

- how it is protected

Transparency helps build trust.

4. Monitor AI Systems Regularly

AI systems should be monitored to detect unusual behavior or potential security threats.

Regular monitoring helps identify issues early.
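As one possible form of such monitoring, the sketch below flags a day on which an AI agent reads far more customer records than usual. The three-standard-deviation threshold and the daily counts are illustrative assumptions; real monitoring would typically use a dedicated logging or alerting tool.

```python
from statistics import mean, stdev


def is_unusual(history: list[int], current: int, threshold: float = 3.0) -> bool:
    """Flag current if it is more than `threshold` standard deviations
    above the mean of the historical counts."""
    if len(history) < 2:
        return False  # not enough data to estimate the spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return current > mu
    return (current - mu) / sigma > threshold


# Made-up daily counts of customer records read by one agent.
daily_reads = [102, 98, 110, 95, 105, 99, 101]
print(is_unusual(daily_reads, 108))  # False
print(is_unusual(daily_reads, 450))  # True
```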

5. Train Employees on Data Privacy

Employees must understand how to handle data responsibly.

Organizations should provide training on:

- privacy regulations

- cybersecurity practices

- ethical AI usage

Role of Ethical AI in Data Privacy

Ethical AI development is essential for protecting data privacy.

Ethical AI focuses on responsible design and usage of artificial intelligence systems.

Key principles of ethical AI include:

Accountability

Businesses must take responsibility for how AI systems use data.

Fairness

AI systems should treat all individuals fairly without discrimination.

Transparency

Users should understand how AI systems operate.

Privacy Protection

AI systems must respect user privacy and protect sensitive information.

Future of AI and Data Privacy

As AI technology continues to evolve, data privacy will become even more important.

Future developments may include:

- stronger AI regulations

- improved privacy-preserving technologies

- advanced encryption techniques

- better AI transparency tools

Businesses that prioritize privacy will gain greater trust from customers and regulators.

Conclusion

AI agents are becoming essential tools for modern businesses. They help automate tasks, analyze data, and improve decision-making. However, these benefits come with significant responsibilities related to data privacy.

Businesses must understand how AI systems use data and implement strong privacy protections. Protecting customer information is not only a legal obligation but also a critical factor in building trust.

By following best practices such as strong security measures, transparent policies, and ethical AI principles, businesses can safely use AI agents while protecting sensitive data.

As AI adoption continues to grow, organizations that prioritize data privacy will be better positioned for long-term success.


FAQs

1. What is data privacy in AI systems?

Data privacy in AI systems means protecting the personal and sensitive information those systems process, and controlling how it is collected, used, and shared.

2. Why is data privacy important for businesses using AI?

Data privacy helps protect customer information, maintain trust, and comply with legal regulations.

3. Can AI agents misuse customer data?

AI agents themselves do not intentionally misuse data, but poor system design or security vulnerabilities can create risks.

4. How can businesses protect data when using AI?

Businesses can implement encryption, strong security systems, transparency policies, and regular monitoring.

5. Are there laws regulating AI and data privacy?

Yes. Laws such as the European Union's GDPR and California's CCPA govern how businesses handle personal data, and many other countries have their own data protection regulations.