Artificial intelligence is becoming part of our daily life. We use AI assistants for chatting, searching, writing, and even managing business tasks. But many people overlook one important thing: data privacy. Every time you use an AI assistant, you share information. This may include personal data, business details, or other sensitive information.

In 2026, data privacy is one of the biggest concerns in the AI world. Governments are making new rules, and users are becoming more aware. Whether you are a startup founder, a business owner, or an everyday user, understanding AI assistant data privacy policies is very important.

In this guide, you will learn the essentials in simple language. We will explain how AI assistants collect and use data, what the risks are, which laws apply, and how you can protect your information.

What Is AI Assistant Data Privacy?

Before going deeper, it is important to understand the basic meaning.

AI assistant data privacy refers to how your data is collected, stored, used, and protected when you interact with AI systems.

When you use an AI assistant, it may collect:

Your messages

Search queries

Personal details

Business data

Behavior patterns

Data privacy ensures that this information is handled safely and responsibly.

Why Data Privacy Is Important in AI Assistants

AI assistants are powerful tools, but they depend on data.

Without proper privacy policies, your data can be misused.

Data privacy is important because:

It protects personal information

It prevents data misuse

It builds user trust

It ensures legal compliance

It protects businesses from risks

In simple words, without data privacy, AI cannot be trusted.

How AI Assistants Collect Data

To understand AI data privacy, you must know how data is collected.

AI assistants collect data in multiple ways.

Types of Data Collection

AI systems gather information from different sources.

User input data

Device data

Usage behavior

Location data

Third-party integrations

For example, when you type a question, the assistant processes that input to generate a response. Depending on the provider's policy, it may also store the input to improve future responses.

How AI Assistants Use Data

After collecting data, AI assistants use it for different purposes.

Common Uses of Data

AI systems use data to improve performance and user experience.

Improve responses

Train AI models

Personalize results

Automate tasks

Analyze trends

For example, if you ask similar questions repeatedly, an assistant that personalizes results may tailor its answers to your past questions.

Types of Data in AI Privacy

Not all data is the same. Some data is more sensitive.

Categories of Data

Understanding data types helps in better protection.

Personal data

Sensitive data

Business data

Public data

Sensitive data includes financial details, passwords, and confidential business information.

AI Data Privacy Risks

AI assistants are helpful, but they also have risks.

Common Risks in AI Privacy

Without proper security, data can be exposed.

Data leaks

Unauthorized access

Data misuse

Bias in data

Over-collection of data

These risks can affect both individuals and businesses.

AI Assistant Data Privacy Laws (2026)

Governments are creating strict rules to protect data.

Major Data Privacy Regulations

Different countries have their own laws.

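GDPR: General Data Protection Regulation (European Union)

CCPA: California Consumer Privacy Act (California)

DPDP Act: Digital Personal Data Protection Act (India)

These laws require companies to handle personal data responsibly.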

They focus on:

User consent

Data protection

Transparency

User rights

Key Principles of AI Data Privacy Policies

Every AI system should follow some basic privacy principles.

Core Principles

These principles ensure safe data handling.

Transparency

User consent

Data minimization

Security

Accountability

Companies must clearly explain how they use data.

AI Assistant Data Security Measures

To protect data, AI systems use security measures.

Common Security Practices

Strong security reduces risks.

Encryption

Access control

Secure storage

Regular audits

Monitoring systems

These methods help prevent data breaches.
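
As an illustration of access control, here is a minimal Python sketch. The role names, the action names, and the `can_access` helper are all assumptions made up for this example. A real system would usually rely on its platform's own permission features.

```python
# Hypothetical roles and permissions, chosen only for illustration.
ROLE_PERMISSIONS = {
    "admin": {"read_logs", "delete_logs", "export_data"},
    "support": {"read_logs"},
}


def can_access(role: str, action: str) -> bool:
    """Return True only if the role is explicitly granted the action."""
    # Unknown roles get an empty permission set, so access is denied by default.
    return action in ROLE_PERMISSIONS.get(role, set())


print(can_access("support", "read_logs"))
print(can_access("support", "export_data"))
```

The idea is "deny by default": a person sees stored conversations only if their role was given that permission on purpose.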

How Businesses Should Handle AI Data Privacy

If you are using AI in your business, you must follow proper policies.

Best Practices for Businesses

Businesses must protect customer data.

Create clear privacy policies

Use secure tools

Limit data collection

Train employees

Follow legal rules

This builds trust with customers.
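
To show what "limit data collection" can look like in practice, here is a small Python sketch. The field names and the `minimize` helper are hypothetical. The point is only that a record is trimmed to an approved list of fields before it is stored or sent to an AI tool.

```python
# Hypothetical allow-list: only the fields the assistant actually needs.
ALLOWED_FIELDS = {"user_id", "question", "language"}


def minimize(record: dict) -> dict:
    """Return a copy of the record that keeps only allow-listed fields."""
    return {key: value for key, value in record.items() if key in ALLOWED_FIELDS}


customer = {
    "user_id": 42,
    "question": "How do I reset my password?",
    "phone": "555-0100",       # not needed to answer the question
    "home_address": "1 Main St",  # not needed either
}
print(minimize(customer))
```

An allow-list is safer than a block-list here, because any new field added later is dropped unless someone decides it is needed.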

AI Privacy Challenges for Startups

Startups face unique challenges in data privacy.

Common Challenges

Limited budget

Lack of expertise

Rapid scaling

Complex regulations

Despite challenges, startups must prioritize privacy.

How to Protect Your Data While Using AI Assistants

Users must also take responsibility.

Simple Safety Tips

You can protect your data by following these steps.

Avoid sharing sensitive information

Use trusted platforms

Read privacy policies

Enable security settings

Monitor data usage

Small steps can reduce big risks.
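
For readers who are comfortable with a little code, the first tip can be partly automated. The Python sketch below masks email addresses and card-like numbers before a message is sent to an assistant. The two patterns and the `redact` helper are simplified assumptions for illustration. They will miss many kinds of sensitive data, so they do not replace careful reading.

```python
import re

# Simplified, hypothetical patterns; real detection of personal data needs many more cases.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 16 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def redact(text: str) -> str:
    """Replace email addresses and card-like digit runs with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = CARD_RE.sub("[CARD]", text)
    return text


print(redact("Mail jane@example.com about card 4111 1111 1111 1111 today"))
```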

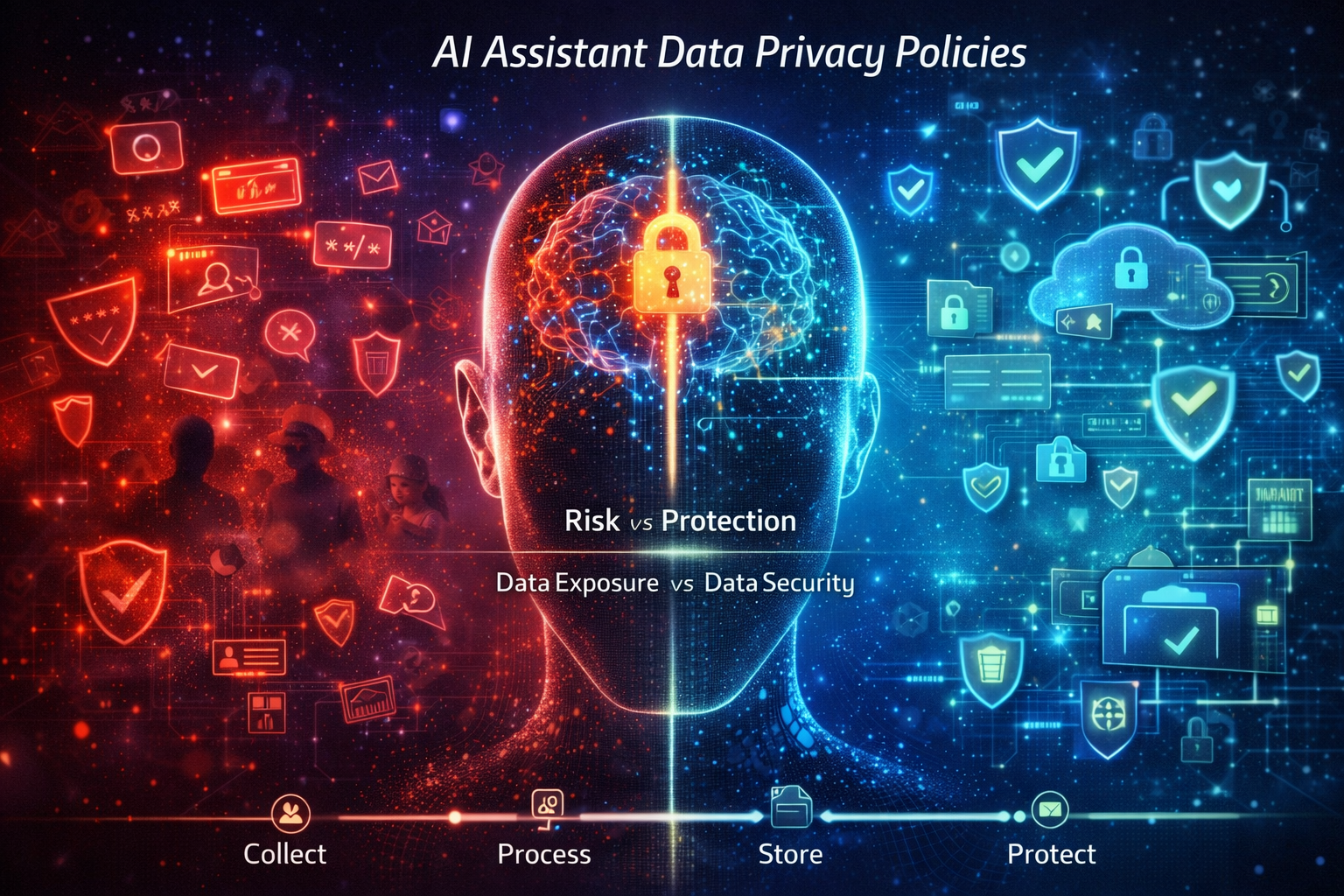

AI Data Privacy vs Data Security

Many people confuse privacy and security.

Data privacy is about what data is collected, how it is used, and who it is shared with.

Data security is about protecting that data from threats such as leaks and unauthorized access.

Both are important.

Ethical Concerns in AI Data Privacy

AI is not just about technology. It also involves ethics.

Key Ethical Issues

Bias in AI

Lack of transparency

Misuse of data

Surveillance concerns

Ethical AI ensures fairness and trust.

Future of AI Data Privacy (2026 and Beyond)

AI is evolving, and so are privacy rules.

Future Trends

Stronger regulations

Better encryption

AI-driven security

User-controlled data

Transparent AI systems

Data privacy is likely to become a major competitive advantage.

Why Data Privacy Matters for Startup Founders

Startup founders must understand privacy.

Business Impact

Build customer trust

Avoid legal penalties

Improve brand reputation

Ensure long-term growth

Privacy is not a cost. It is an investment.

Common Mistakes to Avoid

Many people make mistakes in handling AI data.

Mistakes to Avoid

Ignoring privacy policies

Sharing sensitive data

Using untrusted tools

Not updating security

Over-collecting data

Avoiding these mistakes can save you from problems.

How to Create an AI Data Privacy Policy

If you are building an AI product, you need a policy.

Steps to Create Policy

Define data collection

Explain data usage

Mention user rights

Add security measures

Ensure legal compliance

Keep the policy simple and transparent.
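
The five steps above can also be tracked as a simple checklist. The Python sketch below is one hypothetical way to do that. The `PrivacyPolicy` class, its field names, and the `missing_sections` helper are invented for this example and are not a legal template.

```python
from dataclasses import dataclass, fields


@dataclass
class PrivacyPolicy:
    """One text field per step; an empty string means the section is not written yet."""
    data_collected: str = ""
    data_usage: str = ""
    user_rights: str = ""
    security_measures: str = ""
    legal_compliance: str = ""


def missing_sections(policy: PrivacyPolicy) -> list[str]:
    """Return the names of sections that are still empty, in step order."""
    return [f.name for f in fields(policy) if not getattr(policy, f.name).strip()]


draft = PrivacyPolicy(
    data_collected="Chat messages and account email",
    data_usage="Answering questions and improving responses",
)
print(missing_sections(draft))
```

A check like this only confirms that each section exists. Whether the wording is clear and legally sound still needs human review.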

Conclusion

AI assistant data privacy policies are very important in 2026. As AI becomes part of daily life, protecting data becomes a priority.

Understanding how AI collects and uses data helps you stay safe. Businesses must follow privacy rules to build trust. Users must also be careful while sharing information.

The future of AI depends on trust. And trust depends on data privacy.

If you use AI wisely and follow proper policies, you can enjoy its benefits with far less risk.

Frequently Asked Questions (FAQs)

1. What is AI assistant data privacy?

It refers to how user data is collected, stored, used, and protected when people interact with AI systems.

2. Is AI safe for personal data?

It can be, if the provider follows proper security and privacy measures and you limit what you share.

3. What data do AI assistants collect?

They collect user inputs, behavior, and sometimes personal data.

4. How can I protect my data?

Avoid sharing sensitive information and use trusted platforms.

5. Are there laws for AI data privacy?

Yes, laws like the GDPR, the CCPA, and the DPDP Act regulate how personal data is used, including by AI systems.

6. Can AI misuse data?

Yes, if not properly controlled.

7. Why is data privacy important?

It protects users, builds trust, and ensures legal compliance.